Object Detection, Tracking and Analysis in Video: A Comparative Study of CNN and YOLOv8

Download PDFThis paper presents a comparative study of two deep learning approaches for real-time object detection in surveillance video: Faster R-CNN with ResNet-101 backbone (two-stage) and YOLOv8 with CSPDarknet backbone (single-stage). Both models were evaluated on a custom classroom surveillance dataset from Thanh Do University under varying illumination conditions. YOLOv8 achieves 85.71% detection accuracy versus 71.43% for CNN, with approximately 56x faster inference.

Introduction

Object detection in video underpins security surveillance, intelligent transportation, and agricultural monitoring. Challenges include object motion, occlusion, scale variation, and dynamic illumination.

Historical methods — Haar cascades, HOG+SVM, Deformable Parts Models — offered limited representational capacity and poor generalization.

Two paradigms emerged from deep learning:

- Two-stage (R-CNN family): region proposals then classification — high accuracy, slow

- Single-stage (YOLO, SSD): single forward pass — fast, real-time capable

Theoretical Background

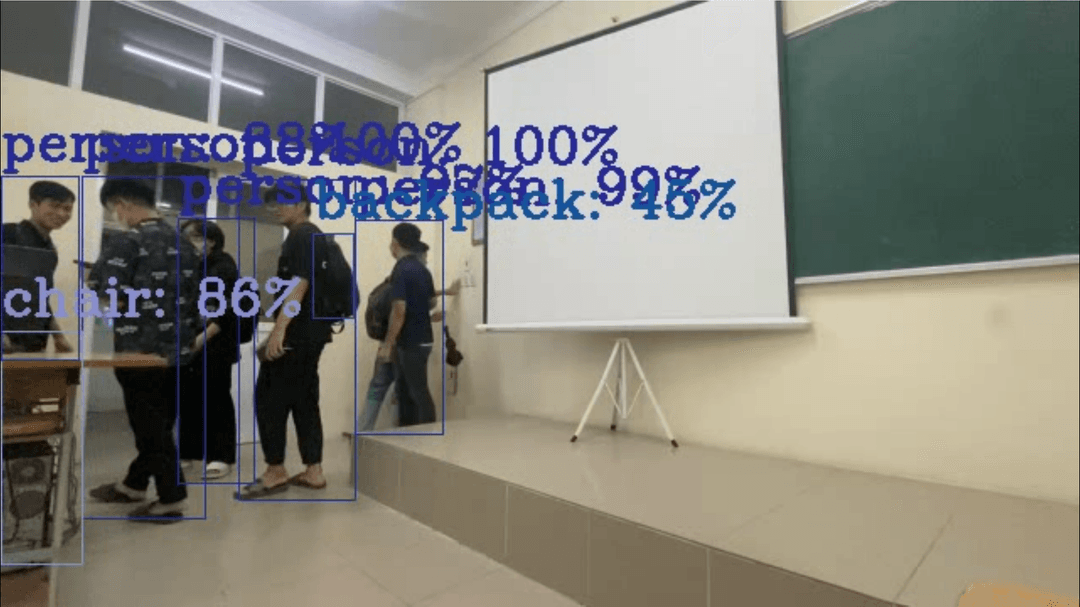

Faster R-CNN

ResNet-101 backbone, RPN with 9 anchors/position (3 scales x 3 ratios), NMS at IoU 0.7, RoI Pooling to classification head. Loss: cross-entropy + smooth-L1 regression.

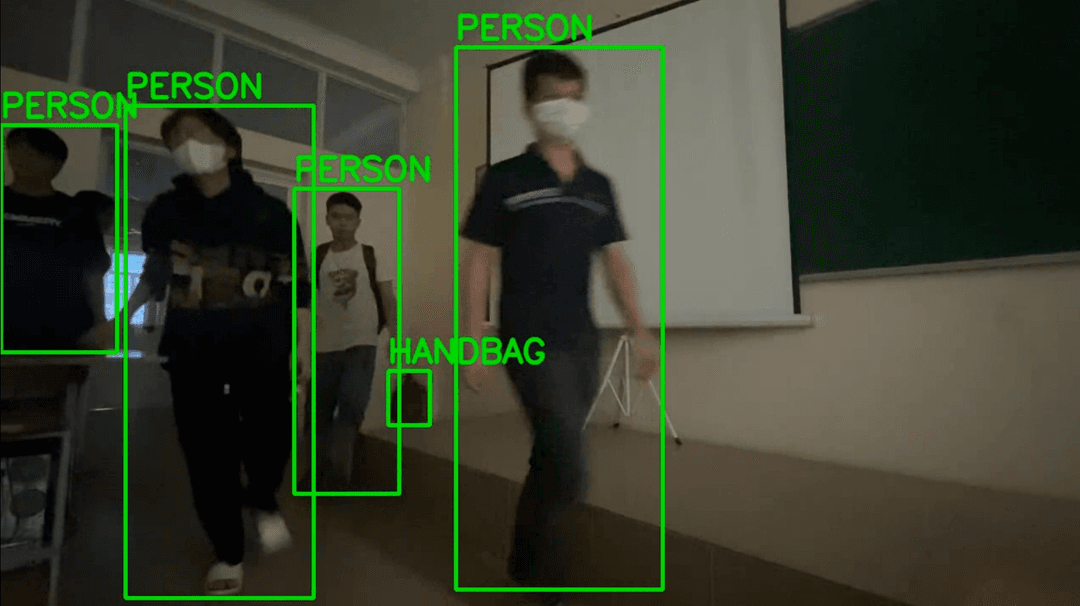

YOLOv8

CSPDarknet backbone extracting features at P3/P4/P5 (strides 8/16/32), CSP-PAN neck for bidirectional feature fusion, decoupled head with separate classification + regression branches. Loss: CIoU + BCE + DFL.

Computational Complexity

| Metric | Faster R-CNN | YOLOv8n |

|---|---|---|

| Parameters | 60.1M | 3.2M |

| FLOPs (640x640) | ~134 GFLOPs | ~8.7 GFLOPs |

| Model size | ~240 MB | ~6.3 MB |

| GPU inference | ~200 ms | ~3.6 ms |

| CPU inference | ~2000 ms | ~80 ms |

| Throughput | ~5 FPS | ~280 FPS |

Experimental Setup

Dataset: 5 classroom surveillance videos captured with iPhone 11 Pro Max at 1080p 30FPS, totaling approximately 40,000 frames. Three scenarios: students entering, packing up, exiting. Annotations via CVAT.ai (person class only).

Dataset Split

| Split | Videos | Frames (approx.) | Percentage |

|---|---|---|---|

| Training | 3 | 24,000 | 80% |

| Validation | 0.5 | 4,000 | 10% |

| Test | 1 | 8,000 | 10% |

| Total | 5 | ~40,000 | 100% |

YOLOv8 Augmentation

- Mosaic: 4-image combination

- MixUp: pixel interpolation, Beta distribution

- CutMix: patch replacement encouraging full-image attention

Results

Detection Performance

| Metric | CNN (Faster R-CNN) | YOLOv8 |

|---|---|---|

| True Positives | 5 | 6 |

| False Positives | 0 | 0 |

| False Negatives | 2 | 1 |

| Accuracy | 71.43% | 85.71% |

| Precision | 100.0% | 100.0% |

| Recall | 71.43% | 85.71% |

| F1-Score | 83.33% | 92.31% |

Illumination Robustness

| Condition | CNN | YOLOv8 | Delta |

|---|---|---|---|

| Normal lighting | 71.43% | 85.71% | +14.28% |

| Low-light | ~57%* | ~80%* | +23%* |

| Degradation | ~14% | ~6% | — |

*Estimated from qualitative frame-by-frame analysis.

Inference Speed

| Metric | Faster R-CNN | YOLOv8 | Speedup |

|---|---|---|---|

| GPU inference/frame | ~200 ms | ~3.6 ms | ~56x |

| GPU FPS | ~5 | ~280 | 56x |

| CPU FPS | ~0.5 | ~12 | 24x |

| Real-time capable | No | Yes | — |

Augmentation Ablation

| Configuration | Accuracy | Delta |

|---|---|---|

| Full (Mosaic + MixUp + CutMix) | 85.71% | baseline |

| No Mosaic | 78.57% | -7.14% |

| No MixUp | 82.14% | -3.57% |

| No CutMix | 83.93% | -1.78% |

| No augmentation | 71.43% | -14.28% |

Conclusion

Principal Findings

- YOLOv8: 85.71% accuracy vs 71.43% CNN (+14.28pp), F1 92.31% vs 83.33%

- Low-light: YOLOv8 maintains ~80% vs CNN ~57% (gap widens to ~23pp)

- Efficiency: 56x faster GPU inference, 19x fewer parameters, 15x fewer FLOPs

- Augmentation accounts for most of YOLOv8's advantage — without it, accuracy drops to CNN baseline (71.43%)

Future Directions

Vision Transformers (DETR, RT-DETR) for occluded objects, hybrid CNN-YOLO pipelines, DeepSORT/ByteTrack multi-object tracking integration, edge deployment (INT8 quantization, Jetson Nano, Raspberry Pi), unsupervised domain adaptation for nighttime/infrared scenarios.